Highlighted Roles & Projects

Wavefront Audio

Founder & Inventor

Inventor of core patent-pending technology for a next-generation speaker company.

R&D Movie Studio Manager

Lab Manager & Creative Technologist

Lab Manager & Creative Technologist under Academy Award winner Jack Cashin

Ocean Site One

Computer Vision Lead

Lead computer vision engineer for an international public event, turning a large-format projector into an explorable world

Reverse Projection and 7.1 Surround Sound Outdoor Movie

Projection Mapping & Audio Systems

Custom screen, audio system, and projection mapping for a reverse-projection showing of Fantastic Mr. Fox, with a theatrical 7.1-channel audio up-mix

F.R.I.D.A. (Friendly Imperial Droid Assistant)

Animatronics & LLM Systems

A Star Wars-inspired animatronic with face tracking eyes and LLM-enabled communication

LLM Cursor: An On Cursor LLM Writing Tool

Real-Time Writing Systems

A real-time, system-level editing assistant that automatically adjusts your writing to the desired tone as you go

Deep Gerchberg Saxton

Physics-Informed Neural Networks

A PINN-based deep learning formulation of the Gerchberg-Saxton algorithm for volumetric phase retrieval in immersive displays

Fluid Simulation Engine for Immersive Environments

Volume Rendering & Acoustics

OpenGL Volume Rendering Engine for designing visual displays and acoustic fields in immersive environments

Other Roles & Projects

Ultrasound for Colony Collapse in Bee Hives

Investigating the effect of high-amplitude ultrasound on Varroa destructor mites

Motion Capture Tech for Percy Jackson Musical

Captured Performer Motion for Projected Digital Character Animation

Acoustophoresis (Acoustic Levitation)

R&D in sound-based levitation of objects in immersive displays

Gesture-Based Computer Control

Fully computer-vision-based control of a computer, including cursor, keyboard, and scrolling

boX: Custom Deep Learning Server

An Ubuntu Server Configuration for Remote Deep Learning Tasks

FISA-B Mobile App Head Manager

While living in India, managed a team of five PhD students in developing and shipping a mobile app

Adolescent Expanding Knee Replacements

An oxidation-driven expansion rate in a layered titanium prosthesis for children with knee conditions

Micro-Financing Researcher: Bangalore, India

Researcher with Center for Social Action in Bangalore

Art, Travel, & Adventure

Life and Culture through Surf Photography

An exploration of a selection of my photos chosen to tell stories.

‘Super Complex’ Origami

Some of my favorite origami pieces: modern takes on an ancient art form with intricate design, a single piece of paper, and no cutting or tearing.

Ink Drawing

A collection of my abstract, impressionistic, and geometric ink drawings.

Bringing in Color: Abstract and Impressionistic Digital Art

A collection of my digital art.

Pastel Impressions

Mixed Media Limited Palette Figurative Impressions.

On Causal Force: A Modern Perspective on the Philosophy of Causation

A rigorous work in modern metaphysical philosophy.

Trekking in the Himalayas: SAR Pass

Ice picks, mules, and diseased glacial water.

My Life in India

A glimpse into my life in India at a local university, as a researcher, and mobile application team manager.

Eclectic Adventures

Scuba Diving, Drone Photography, Big Mountain Skiing, Climbing Mt. Fuji, etc.

NATHAN GOLLAY

Compassionate ∩ Curious ∩ Persistent ∩ Innovative ∩ Collaborative

Ocean Site One

Lead engineer for an international public event, turning a large-format 360° video into a touchless, gesture-controlled interface

Summary

Ocean Site One is an initiative to raise awareness of the growing concern over decommissioned oil rigs. Though terrible for the environment during their operation, oil rigs grow over their lifetimes to become a staple of marine life: they are home to some of the most prolific artificial reefs, fostering biodiversity and ocean health at a large scale. Efforts to remove old oil rigs risk destroying these habitats. Ocean Site One is an immersive international event, most recently reaching as far as the U.K. A 360° video documenting the marine life under an oil rig off the coast of Santa Barbara was filmed to be played on large-format projection screens.

My team created technology that let guests interact with the 360° video, turning the camera view in new directions, using only their hands in the air. This transformed the 360° video into an explorable world and an immersive, tech-forward experience.

Key Contribution: Developed and deployed an end-to-end perception-to-rendering system that turns a projected 360° environment into a touchless, gesture-controlled interface, using computer vision under real-time constraints in a public exhibit.

Skills

Computer Vision Systems ▪︎ Gesture Recognition for Public Installations ▪︎ Touchless Interface Design ▪︎ Immersive and Experiential Media Systems ▪︎ Motion Mapping & Spatial Transformations ▪︎ Projection Mapping & Large-Format Displays ▪︎ 360° Video Interaction Design ▪︎ Technical Storyboarding ▪︎ Real-Time Systems Design ▪︎ Robust Systems for Live Public Events ▪︎ User Experience Testing ▪︎ Bayesian & Classical ML Models for Perception ▪︎ OpenCV ▪︎ Python ▪︎ C ▪︎ VLC ▪︎ Multiprocessing and Multithreading ▪︎ Agile Development in Interdisciplinary Teams

Highlighted Role

Details:

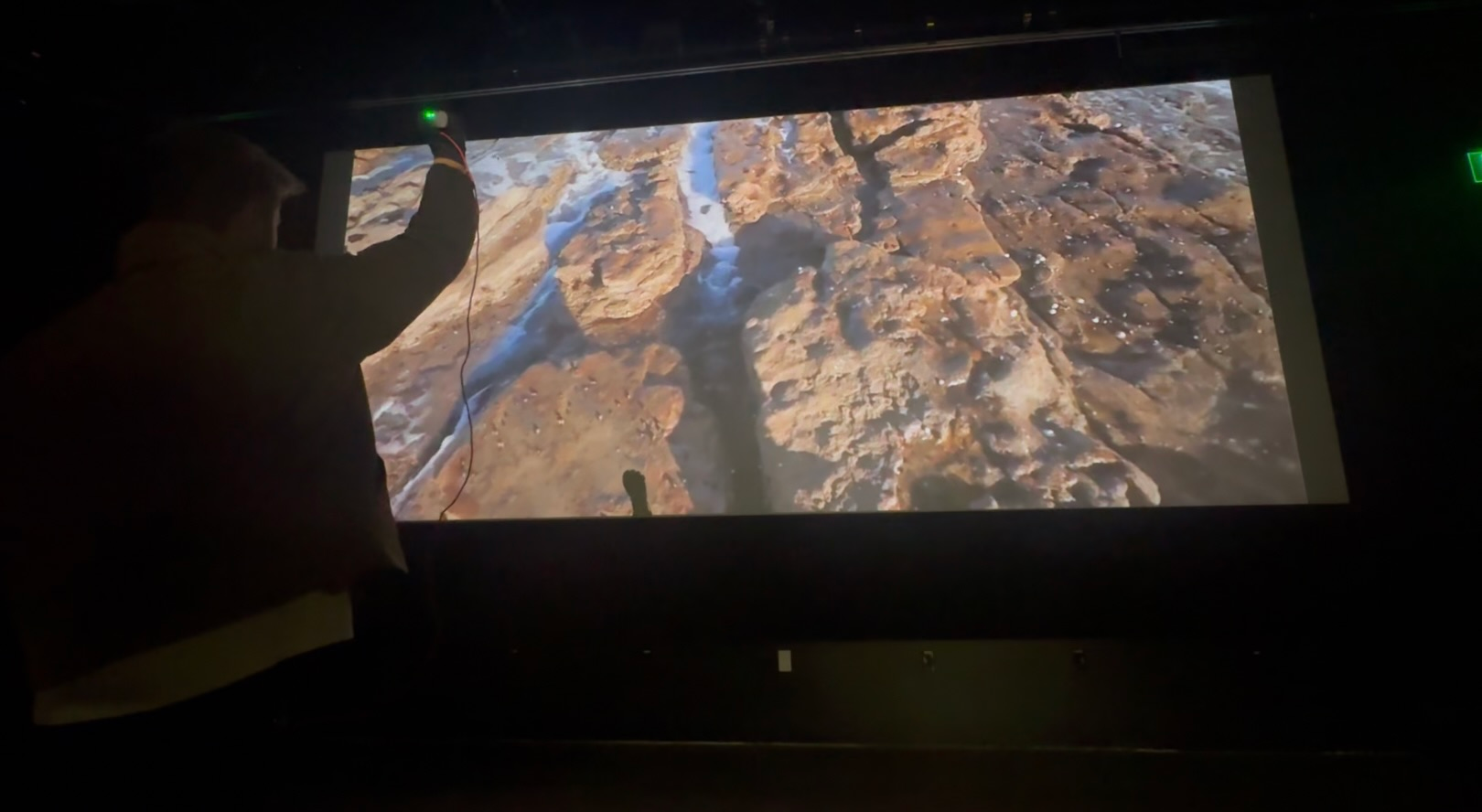

Under the Sea: Event Demo

Blue Latitudes was contracted to create this video, licensed for use by Ocean Site One. As part of this, some of our team joined them on the expedition out to the abandoned oil rig off Santa Barbara and its diverse artificial reef. This is the video.

The video is 360°. Feel free to explore by clicking and moving the video around.

I led my team in designing a system to track users’ movements as they approached the large projection screen showing the video. Users were instructed that they could move the video by raising a hand, grabbing the air (closing their fist), and ‘dragging’ the video to a new perspective. Here is an example of what this movement looks like, demonstrated by yours truly:
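The grab-and-drag interaction can be sketched as a small state machine. This is a minimal illustration and not the exhibit's actual code. It assumes the tracker already supplies a normalized hand position (with y increasing upward) and a closed/open flag each frame. The class name `DragController` and the `degrees_per_unit` gain are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional, Tuple

Point = Tuple[float, float]


@dataclass
class DragController:
    """Hypothetical grab-and-drag controller for a 360° view.

    A closed fist grabs the view, moving the closed hand drags it, and
    opening the hand releases it, leaving the view where it was dropped.
    """
    degrees_per_unit: float = 90.0  # assumed gain: view degrees per unit of hand travel
    yaw: float = 0.0
    pitch: float = 0.0
    _anchor: Optional[Point] = field(default=None, repr=False)
    _view_at_grab: Point = field(default=(0.0, 0.0), repr=False)

    def update(self, hand_xy: Point, hand_closed: bool) -> Point:
        """Feed one frame of tracking data; returns the current (yaw, pitch)."""
        if not hand_closed:
            # Open hand: release any grab; the view stays where it is.
            self._anchor = None
        elif self._anchor is None:
            # Fist just closed: remember where the grab started.
            self._anchor = hand_xy
            self._view_at_grab = (self.yaw, self.pitch)
        else:
            # Dragging, "grab the world" convention: the scene follows the
            # hand, so the camera turns the opposite way.
            dx = hand_xy[0] - self._anchor[0]
            dy = hand_xy[1] - self._anchor[1]
            self.yaw = self._view_at_grab[0] - dx * self.degrees_per_unit
            self.pitch = self._view_at_grab[1] - dy * self.degrees_per_unit
        return self.yaw, self.pitch
```

Measuring the offset from the grab anchor, rather than summing per-frame deltas, keeps small tracking errors from accumulating while the fist stays closed.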

Computer Vision

Guests’ movements were captured with a USB camera, processed for pose detection, and passed through gesture recognition, with the results sent to the 360° video player process, all in quasi-real time.

Pipeline

The gesture recognition algorithms were designed in an Agile way, through small incremental improvements, starting from simple classical machine learning models such as k-nearest neighbors. By far the most important consideration was handling the rotations: quaternions, rotation matrices, and the joint hierarchies that connect them.
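Working with a joint hierarchy comes down to expressing each joint's orientation relative to its parent. The sketch assumes a hypothetical input format: world-frame unit quaternions in (w, x, y, z) order for the shoulder and the hand. It computes the hand's rotation in the shoulder's frame as q_shoulder⁻¹ ⊗ q_hand and converts the result to a rotation matrix. The helper names are illustrative only.

```python
import math
from typing import List, Sequence, Tuple

Quat = Tuple[float, float, float, float]  # (w, x, y, z), assumed unit length


def axis_angle(axis: Sequence[float], degrees: float) -> Quat:
    """Unit quaternion for a rotation of `degrees` about `axis`."""
    half = math.radians(degrees) / 2.0
    norm = math.sqrt(sum(a * a for a in axis))
    s = math.sin(half) / norm
    return (math.cos(half), axis[0] * s, axis[1] * s, axis[2] * s)


def q_mul(a: Quat, b: Quat) -> Quat:
    """Hamilton product a ⊗ b (rotates by b first, then by a)."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )


def q_conj(q: Quat) -> Quat:
    """Conjugate, which equals the inverse for a unit quaternion."""
    w, x, y, z = q
    return (w, -x, -y, -z)


def relative_rotation(parent_world: Quat, child_world: Quat) -> Quat:
    """Child orientation expressed in the parent's frame: parent⁻¹ ⊗ child."""
    return q_mul(q_conj(parent_world), child_world)


def q_to_matrix(q: Quat) -> List[List[float]]:
    """3x3 rotation matrix equivalent to the unit quaternion q."""
    w, x, y, z = q
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]
```

For example, if the shoulder is rotated 30° about z and the hand 75° about z in world coordinates, `relative_rotation` returns a 45° rotation about z. Going the other way, `q_mul(shoulder, rel)` recovers the hand's world orientation.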

Testing showed that our Bayesian model was the most accurate at detecting a closed-hand event. Once a hand was closed, we extracted the rotation of the hand relative to the shoulder by applying the corresponding rotation matrices.
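The write-up does not describe how the Bayesian model is structured, so the following is only one plausible formulation and should be read as an assumption. It is a binary Bayes filter that combines a noisy per-frame "fist detected" signal with the prior that a hand rarely changes state between frames. The `ClosedHandFilter` name and every probability in it are illustrative placeholders, not tuned values.

```python
from dataclasses import dataclass


@dataclass
class ClosedHandFilter:
    """Binary Bayes filter over the hidden state "hand is closed".

    All probabilities are illustrative placeholders, not tuned values.
    """
    p_closed: float = 0.5            # current belief P(closed)
    p_stay: float = 0.9              # assumed P(hand keeps its state between frames)
    p_detect_if_closed: float = 0.8  # assumed P(detector fires | closed)
    p_detect_if_open: float = 0.1    # assumed P(detector fires | open)
    threshold: float = 0.9           # belief needed to report a closed hand

    def update(self, detector_fired: bool) -> bool:
        # Predict: allow for the hand having changed state since the last frame.
        prior = self.p_stay * self.p_closed + (1 - self.p_stay) * (1 - self.p_closed)
        # Update: weight the prior by the likelihood of this frame's observation.
        if detector_fired:
            like_closed, like_open = self.p_detect_if_closed, self.p_detect_if_open
        else:
            like_closed, like_open = 1 - self.p_detect_if_closed, 1 - self.p_detect_if_open
        evidence_closed = like_closed * prior
        self.p_closed = evidence_closed / (evidence_closed + like_open * (1 - prior))
        return self.p_closed >= self.threshold
```

With these placeholder numbers, one spurious detection lifts the belief only to about 0.89, which is below the threshold. Two consecutive detections push it above 0.97, so isolated misfires do not trigger a grab.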

The result of this calculation was projected onto a 2D plane parallel to the projection screen and smoothed with a simple moving average.
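The projection and smoothing steps can be sketched as follows. The sketch makes two assumptions for illustration: the relative rotation matrix is applied to a reference axis of the hand, and the screen's `right` and `up` axes are known orthonormal vectors in the same frame. Under those assumptions, projecting onto the plane parallel to the screen reduces to two dot products. The simple moving average keeps only the last `window` points in a `deque`.

```python
from collections import deque
from typing import Deque, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def mat_vec(m: Sequence[Sequence[float]], v: Vec3) -> Vec3:
    """Apply a 3x3 rotation matrix to a vector."""
    return (
        m[0][0] * v[0] + m[0][1] * v[1] + m[0][2] * v[2],
        m[1][0] * v[0] + m[1][1] * v[1] + m[1][2] * v[2],
        m[2][0] * v[0] + m[2][1] * v[1] + m[2][2] * v[2],
    )


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def project_to_screen_plane(v: Vec3, right: Vec3, up: Vec3) -> Tuple[float, float]:
    """Orthogonal projection of v onto the plane spanned by the screen's
    `right` and `up` axes (assumed orthonormal), as 2D plane coordinates."""
    return dot(v, right), dot(v, up)


class SimpleMovingAverage2D:
    """Unweighted mean of the most recent `window` 2D points."""

    def __init__(self, window: int) -> None:
        self.points: Deque[Tuple[float, float]] = deque(maxlen=window)

    def update(self, point: Tuple[float, float]) -> Tuple[float, float]:
        self.points.append(point)  # the oldest point drops out automatically
        n = len(self.points)
        return (sum(p[0] for p in self.points) / n,
                sum(p[1] for p in self.points) / n)
```

A larger window gives steadier motion at the cost of lag. On a public exhibit, that trade-off is usually tuned by watching real guests.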

Software

Ocean Site One is designed to travel from exhibit to exhibit in a small truck. The software therefore runs on a powerful personal laptop, and efficiency is key. To this end, I employed several strategies and techniques aimed at best-effort real time.

Storyboarding & AGILE

Ocean Site One is massively interdisciplinary and technically ambitious, so proper documentation, Agile workflows, and communication were essential.

I handled storyboarding for the project in addition to leading the computer vision development. I managed two Trello boards: one for software development and one for the project as a whole. The two were linked so that major milestones appeared on both boards, without overloading the non-technical folks with talk of quaternions and rotation matrices that meant little to them.

Multiprocessing and Multithreading

The software had several blocking operations. To run on a typical computer, the final software required careful multiprocessing and multithreading design.

Interprocess communication was handled mainly with pipes, backed by locally stored stacks and queues. The result was best-effort real-time software: the view moved to the new position in the 360° video as soon as a new value was available, with a tree of similar processes and calculations producing that value.
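The "update as soon as a new value is available" behavior can be sketched with `multiprocessing.Pipe`. On each frame, the consumer drains whatever has queued up and keeps only the newest value, so the video player never works through a backlog of stale positions. This is a minimal sketch. The function name `latest` is hypothetical, and the real system's process tree is not reproduced here.

```python
from multiprocessing import Pipe
from multiprocessing.connection import Connection
from typing import Any, Optional


def latest(conn: Connection, current: Optional[Any] = None) -> Optional[Any]:
    """Non-blocking read that keeps only the newest message on `conn`.

    Stale values are discarded. If nothing new has arrived, the caller's
    `current` value is returned unchanged, so the render loop never waits.
    """
    while conn.poll():  # poll() with no timeout returns immediately
        current = conn.recv()
    return current


if __name__ == "__main__":
    # In-process demo. In a real pipeline, the sending end would live in
    # another process, such as the gesture-recognition process.
    recv_end, send_end = Pipe(duplex=False)
    for view in [(10.0, 0.0), (12.5, 1.0), (15.0, 2.0)]:
        send_end.send(view)
    print(latest(recv_end))  # (15.0, 2.0)
```

A player loop might call `view = latest(recv_end, view)` once per frame and apply `view` only when it changes.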

To me, this software is a great example of technology used toward a creative, immersive experience. To curate that experience for guests, the software had to run smoothly, anticipate their actions, and act in step with them. At the same time, it had to be designed so that a staff member's only touch point was pressing the start or stop button, with the software handling all the complexity of human interaction in between on its own.

Custom 360° VLC Video Player

VLC is an open-source media player with a massive code base written in C. While some versions of VLC can play back 360° video, the gesture recognition process needed to control the pitch, yaw, and roll of the view programmatically, through a custom command API.

VLC Code Base

The VLC code base is massive: around 808 MB, 4,589 files, and 2,632,254 lines of code, mostly in C, and it takes around 25 minutes to compile. Even with documentation, just locating the files that dealt with the viewport, and those that dealt with playback, was very difficult.

I include these facts out of a lingering pride in actually having found my needles in that infinite haystack.

Python Bindings

Eventually, I was able to set the pitch, yaw, and roll from the Python side once the program connected to the correct instance of VLC. The result is control over all three rotation axes with a simple bit of Python.
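Before angles are sent to the player, it helps to keep them in a canonical range, so that continued dragging never produces ever-growing values. The sketch below is minimal and rests on stated assumptions: angles are in degrees, yaw and roll wrap around, and pitch is clamped at straight up and straight down. The function names are hypothetical, and the custom VLC command API itself is not shown.

```python
from typing import Tuple


def wrap_degrees(angle: float) -> float:
    """Map any angle in degrees to the half-open range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


def normalize_viewpoint(yaw: float, pitch: float, roll: float) -> Tuple[float, float, float]:
    """Canonical (yaw, pitch, roll): yaw and roll wrap around, while pitch is
    clamped so the view cannot flip past straight up or straight down."""
    return wrap_degrees(yaw), max(-90.0, min(90.0, pitch)), wrap_degrees(roll)


print(normalize_viewpoint(190.0, 120.0, -190.0))  # (-170.0, 90.0, 170.0)
```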

It turns out that VLC was built with intricate limits on the ratio of mouse movement to viewport updates. I worked around this, without spoofing the mouse input monitoring, by identifying an overflow limit and artificially resetting it.
